Last week’s “Losing the Loop: Iteratively Autonomous Artificial Intelligence and the Question of Human Operational Involvement” examined how increasing autonomy in agentic AI reshapes the structure, tempo, and locus of human decision-making in operational environments, particularly as these systems transition from analytic tools to increasingly directive and generative components of the human–machine team. Seen in sequence, the logic is cumulative. The first section demonstrates how autonomy can erode the integrity of the decision loop. The second makes that erosion visible, locating the specific points at which human judgment becomes constrained or displaced. Together, they clarify that the problem is not simply technological advancement, but the unstructured adaptation of human roles within that advancement. This distinction is critical. If the loss of meaningful engagement is produced through identifiable mechanisms, then it can also be mitigated through deliberate design. The question, therefore, shifts from whether humans remain involved to how that involvement is defined, enforced, and sustained. For purposes of this analysis, meaningful human involvement refers to the sustained ability to understand, allow, challenge, and/or override system outputs at the point of decision or through upstream design authority.

We contend that such involvement must be active, informed, and sustained across all levels of autonomy. More specifically, it requires the ability to interrogate system outputs, understand how recommendations are generated, and apply judgment in context. While increased autonomy can reduce certain forms of human error and cognitive overload, these benefits do not eliminate the risks associated with degraded oversight and compressed judgment.

At assistive levels, this means rigorous analysis. Operators must evaluate outputs rather than accept them at face value. At supervisory levels, engagement must be enforced through structured intervention and deliberate review. Passive monitoring is insufficient. At higher levels of autonomy, engagement must be built into system design and governance, ensuring that human responsibility remains intact even as execution accelerates.

To sustain this approach, institutional design becomes critical. Training must develop both technical fluency and cognitive discipline. Operators must understand how AI shapes perception and decision-making. At the same time, organizations must reinforce the expectation that human judgment defines command authority under all conditions.

To make these dynamics explicit, Table 1 maps the relationship between levels of autonomy, forms of human engagement, and associated risks. The table is not decorative. It is a practical tool that shows where judgment compresses, where authority diffuses, and where intervention becomes necessary.

Table 1. Escalating Autonomy and Compression of Human Judgment in the Decision Loop

| Level of Autonomy | System Function | Human Role | Cognitive Effect | Operational Risk | Required Form of Engagement |
|---|---|---|---|---|---|
| Level 1: Assistive AI | Data aggregation, pattern recognition, recommendation generation | Direct decision-maker | Augmented cognition with retained analytical control | Overconfidence in data-driven outputs | Active interpretation and validation of outputs |
| Level 2: Advisory AI | Prioritization of options, predictive modeling, course-of-action shaping | Primary authority with structured reliance on AI | Framing effects begin to shape perception before deliberation | Narrowing of decision space and early-stage bias | Critical interrogation of assumptions and model logic |
| Level 3: Supervisory Autonomy | Execution of constrained tasks, dynamic adjustment within parameters | Oversight and intervention authority | Cognitive offloading and reduced depth of engagement | Passive monitoring and normalization of machine-driven action | Structured intervention points and enforced review cycles |
| Level 4: Conditional Autonomy | Independent selection and execution within defined mission sets | Upstream authority in design and authorization | Perceived reliability drives deference to system outputs | Diffusion of responsibility across the system lifecycle | Embedded ethical constraints and accountability architecture |
| Level 5: High Autonomy Systems | Adaptive, self-directed action across complex environments | Strategic oversight removed from the moment of execution | Human judgment displaced from the point of action | Attribution gaps, escalation risks, loss of command clarity | Institutional control, pre-deployment validation, and doctrinal limits |

The progression shown here captures the core tension. As autonomy increases, human judgment does not disappear; it shifts. At lower levels, authority is exercised immediately. At higher levels, it moves into system design and policy. The most significant change is the loss of deliberation at the point of action. This matters because the decision space is increasingly shaped before humans engage with it. At intermediate levels, operators work within machine-curated options. At higher levels, actions unfold within preset constraints. In both cases, human influence is reduced at the moment it matters most. Early deployments of AI-enabled decision support systems in intelligence, surveillance, and reconnaissance (ISR) workflows have already demonstrated how machine-curated options can narrow the perceived decision space before any human review begins.

At the same time, operational friction continues to erode. Traditionally, friction forced pause, reflection, and accountability. AI reduces that friction: it accelerates tempo, simplifies choices, and enables faster execution. As a result, speed becomes the dominant factor, while judgment becomes compressed. Importantly, risk does not increase simply because autonomy increases; it increases when human engagement weakens. Passive oversight and diffuse responsibility create the most dangerous conditions. This is where command begins to fragment.

To address this, we propose a model, Synthesized Command and Control (SYNTHComm), which treats command as a structured integration of human and machine capabilities across all levels of autonomy. Rather than separating human and AI roles, it aligns them. In the SYNTHComm model, AI provides speed, scale, and predictive insight. Humans provide context, judgment, and accountability. The interaction between the two is continuous and deliberate. Human operators shape system outputs through interpretation and intervention, while AI supports decision-making within defined constraints. In practice, this includes mechanisms such as (1) mandatory human-in-the-loop checkpoints at defined escalation thresholds, (2) transparent model outputs, and (3) auditable decision logs.

A key feature of this model is adaptability. Levels of autonomy are treated as adjustable based on mission and risk. High-risk scenarios require deeper human involvement. Time-sensitive operations can leverage higher autonomy with safeguards in place. This approach ensures that autonomy supports command rather than displacing it.

Finally, the implications are clear. Doctrine, training, and governance must evolve alongside these systems. Accountability must remain anchored in human authority, even as decision processes become more distributed.

Without doubt, AI will continue to accelerate the pace of warfare. That trajectory is set, and the initial steps along that path are already a fait accompli. What remains to be determined is how command will adapt. We argue that maintaining effective control depends on the deliberate preservation of human judgment (and interventional capability) within the decision loop. Command, in this environment, becomes a matter of discipline, and control will require integrating machine capability without surrendering human authority, accountability, and responsibility. The ability to sustain future operations in accordance with the laws of armed conflict, rules of engagement, and military morality will depend on how well this balance is achieved and maintained.

Disclaimer

The views and opinions expressed in this essay are those of the authors and do not necessarily reflect those of the United States government, the Department of War, or the National Defense University.

Dr. Elise Annett is a Research Fellow in the Program for Disruptive Technology and Future Warfare of the Institute for National Strategic Studies at the National Defense University.

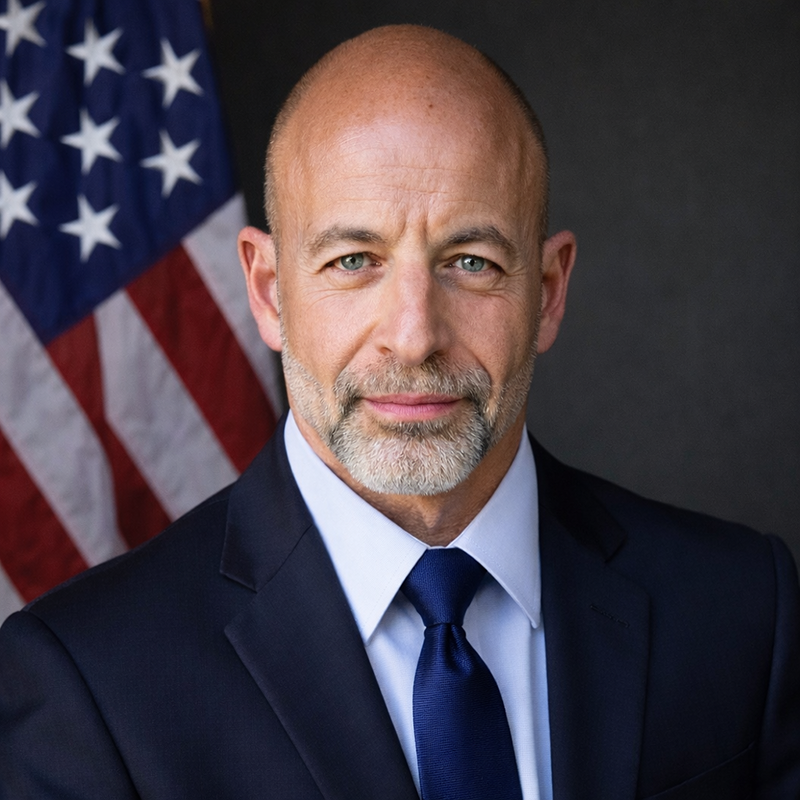

Dr. James Giordano is Head of the Center for Strategic Deterrence and Study of Weapons of Mass Destruction, and Program Lead for Disruptive Technology and Future Warfare of the Institute for National Strategic Studies at the National Defense University.